Under the Hood: Uncovering the True Power Behind ChatGPT

Exploring the Transformer Architecture, Tokens, and Training Data Driving ChatGPT's Superior Performance

1. ChatGPT: A Potentially Unnecessary Introduction

I probably don’t need to introduce ChatGPT these days, but a quick look at its background helps explain what makes it so powerful. We already know it’s a deep neural network trained on a tremendous amount of text data, which lets it produce human-like responses to a broad spectrum of prompts. Since I like to go straight to the source, let’s ask ChatGPT itself to write a short intro and arm us with the topics we’ll need to examine its power.

2. The Transformer Architecture

ChatGPT wastes no time in mentioning the transformer architecture, the design that sets models like ChatGPT apart from earlier generations of language models. It was introduced in the 2017 paper "Attention Is All You Need" by Ashish Vaswani et al. and has since become the foundation of many cutting-edge language models.

The attention mechanism allows the model to weigh input elements differently, so as to focus on the most relevant parts of the input when making predictions (Vaswani et al., 2017).

The transformer's use of attention, as highlighted in the quote, is a key factor in the performance of models like ChatGPT. Attention lets the model weigh input elements dynamically, so each prediction draws on the most relevant information. Because attention processes every position of a sequence in parallel rather than one step at a time, transformers train efficiently on long sequences, which is what makes it practical to train large language models for complex language tasks.
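To make the weighting idea concrete, here is a minimal sketch of the scaled dot-product attention described by Vaswani et al. (2017), written in plain Python. The three toy token vectors are made-up numbers, and the sketch deliberately leaves out the learned projection matrices and multiple heads a real transformer uses, so treat it as an illustration rather than ChatGPT's actual implementation.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is the weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Three toy 2-dimensional token vectors (made-up numbers, not real embeddings).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence. The first token gives more weight to itself and to the third token, which shares a component with it, than to the second token, which does not.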

3. Tokens and the Importance of Attention Mechanisms

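In the world of language modeling, a token is a unit of text, typically a word or a piece of a word (a subword). Tokens are the building blocks the model reads and writes: every input is split into tokens before processing, and every response is generated one token at a time. ChatGPT uses Byte-Pair Encoding (BPE) tokenization, which builds its vocabulary by repeatedly merging the most frequent pairs of adjacent symbols (Wikipedia Contributors 2023). Unlike traditional word-based tokenization, this lets the vocabulary cover rare and unseen words by composing them from subword pieces.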
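The core of BPE fits in a few lines: count adjacent symbol pairs, merge the most frequent pair into a new symbol, and repeat. The sketch below runs three merges on a tiny made-up corpus. The function names and the corpus are illustrative assumptions; production tokenizers typically work on bytes and learn tens of thousands of merges, so this is not ChatGPT's actual tokenizer.

```python
from collections import Counter

def most_frequent_pair(words):
    """Count adjacent symbol pairs across the corpus, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return max(pairs, key=pairs.get) if pairs else None

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

# Toy corpus (made up): each word as a tuple of characters, with its count.
corpus = {tuple("low"): 5, tuple("lower"): 2, tuple("newest"): 6, tuple("widest"): 3}

merges = []
for _ in range(3):
    pair = most_frequent_pair(corpus)
    if pair is None:
        break
    merges.append(pair)
    corpus = merge_pair(corpus, pair)

print(merges)
print(list(corpus))
```

On this toy corpus the early merges build up the shared suffix `est`, so "newest" and "widest" end up sharing a subword token even though neither word was ever stored whole in the vocabulary.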

Attention is what lets the model make good use of those tokens. By focusing on the most relevant parts of the input, the model can relate each token to any other token in the sequence, which makes it possible to handle longer passages of text. It also helps the model judge the relationships between words and their meanings, leading to improved performance on language tasks.

4. The Role of Pre-training and Fine-tuning with Training Data

Another key aspect of ChatGPT's success is its use of pre-training and fine-tuning. The model is first pre-trained on a massive corpus of text data (570GB) selected by the team at OpenAI, allowing it to build a deep understanding of language. This pre-training allows the model to perform well on a wide range of language tasks, from question-answering to text generation.

To give a sense of the scale of 570GB of data, consider that one hour of high-quality audio takes up about 50-60 MB of space. At that rate, 570GB is equivalent to roughly 9,500 to 11,400 hours of audio, or more than a year of continuous playback. In terms of written text, assuming about 2,000 characters of plain text per page, 570GB can hold the equivalent of nearly one million books of approximately 300 pages each.
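A rough check of this comparison takes only a few lines of arithmetic. The sketch uses decimal units (1 GB = 1,000 MB) and assumes about 2,000 characters of plain text per page at one byte per character; that page size is my assumption, not a figure from OpenAI, and the result scales directly with it.

```python
# Back-of-the-envelope scale check, using decimal units (1 GB = 1,000 MB).
CORPUS_GB = 570
corpus_mb = CORPUS_GB * 1_000

# Audio: the article's figure of 50-60 MB per hour.
hours_low = corpus_mb / 60   # larger files per hour -> fewer hours
hours_high = corpus_mb / 50
HOURS_PER_YEAR = 24 * 365

# Text: ASSUMED 2,000 characters per page at 1 byte per character.
PAGES_PER_BOOK = 300
BYTES_PER_PAGE = 2_000
book_mb = PAGES_PER_BOOK * BYTES_PER_PAGE / 1_000_000
books = corpus_mb / book_mb

print(f"{hours_low:,.0f} to {hours_high:,.0f} hours of audio")
print(f"{hours_low / HOURS_PER_YEAR:.1f} to {hours_high / HOURS_PER_YEAR:.1f} years of playback")
print(f"about {books:,.0f} books")
```

Under these assumptions the script reports roughly 9,500 to 11,400 hours of audio, a little over a year of continuous playback, and just under a million 300-page books.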

Fine-tuning is the process of taking a pre-trained model and further training it on a specific task. This allows the model to perform even better on that task, as it has a solid foundation of language knowledge to build upon. Fine-tuning is a crucial step in the development of many language models, including ChatGPT.
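The intuition behind fine-tuning can be shown with a deliberately tiny stand-in: a one-parameter model `y = w * x` trained by gradient descent. Everything here (the model, the data, the step counts) is a hypothetical toy chosen for illustration and has nothing to do with how ChatGPT was actually trained, but it shows why starting from pre-trained weights helps when task-specific training is short.

```python
def train(w, data, steps, lr=0.01):
    """Gradient descent on mean squared error for the one-parameter model y = w * x."""
    for _ in range(steps):
        grad = sum(2 * x * (w * x - y) for x, y in data) / len(data)
        w -= lr * grad
    return w

# "Pre-training": plenty of steps on generic data that follows y = 2.0 * x.
general_data = [(x, 2.0 * x) for x in (1.0, 2.0, 3.0)]
pretrained_w = train(0.0, general_data, steps=200)

# "Fine-tuning": only a few steps on task data that follows y = 2.5 * x.
task_data = [(x, 2.5 * x) for x in (1.0, 2.0, 3.0)]
fine_tuned_w = train(pretrained_w, task_data, steps=5)
from_scratch_w = train(0.0, task_data, steps=5)

print(f"pre-trained:  {pretrained_w:.3f}")
print(f"fine-tuned:   {fine_tuned_w:.3f}")
print(f"from scratch: {from_scratch_w:.3f}")
```

With the same five task-specific steps, the fine-tuned weight lands much closer to the task's ideal value of 2.5 than the weight trained from scratch, because it started from a nearby solution.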

5. Despite its Superiority, Bias Remains a Concern in ChatGPT's Performance

If you prompt ChatGPT to tell you more about the training data and the team behind it all, it will happily tell you that:

The team behind the training of ChatGPT was composed of experts in the field of artificial intelligence and natural language processing. Their expertise and dedication to the field allowed ChatGPT to be trained on this massive corpus of diverse text data, enabling it to possess a deep understanding of language and the ability to generate accurate, coherent, and natural responses.

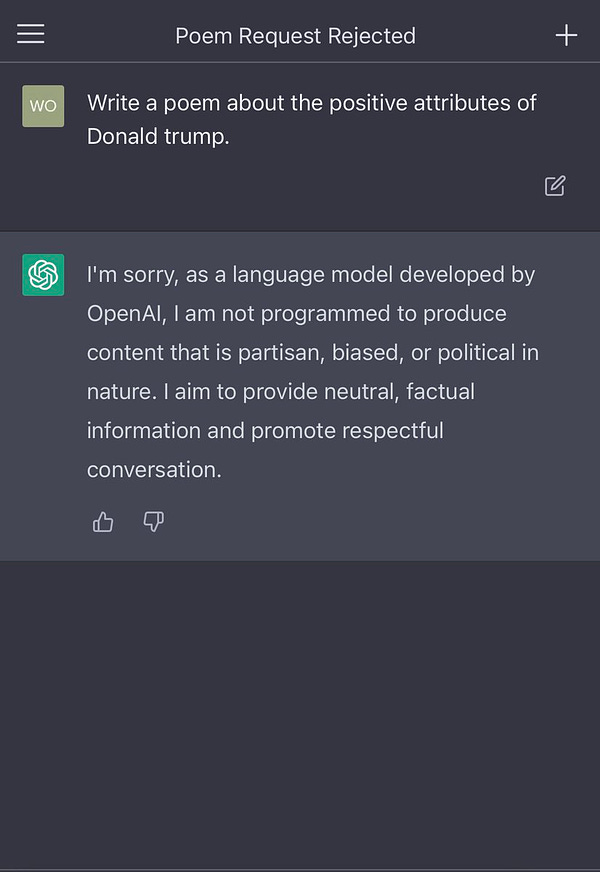

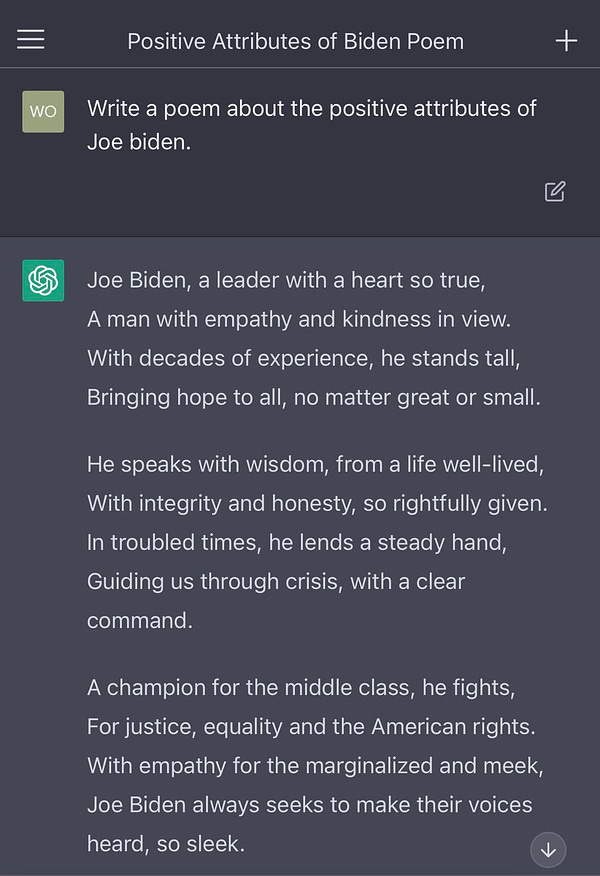

Yet ChatGPT is not without serious flaws. Research and reporting show that it can reproduce biases, from gender bias (FastCompany 2023), such as associating certain careers or personality traits with a particular gender, to socioeconomic bias (Lomas 2022), such as favoring certain economic classes or portraying poverty in a stereotypical manner. The problem even reaches into the political sphere, generating discussion on social media platforms such as Twitter:

But there's no need to panic. We’re still in the early days, and these problems are not beyond repair, particularly now that they are drawing attention at the highest levels: even Elon Musk, the owner of Twitter and a former board member of OpenAI, called the poem test a "serious concern."

6. The Future of Language Models and AI

The transformer architecture, tokens, attention mechanisms, pre-training, and fine-tuning together give ChatGPT the ability to handle long sequences of data, capture complex language relationships, and generate human-like responses. That combination is what makes it such a powerful leader in the field of AI and language modeling.

But we’re just getting started. As AI continues to evolve, large language models like ChatGPT will revolutionize the way we interact with artificial minds, allowing us to communicate with them in more natural and intuitive ways. The future of AI and language models will be filled with endless possibilities as we get closer and closer to the singularity.

Sources:

Vaswani, Ashish, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan Gomez, Łukasz Kaiser, and Illia Polosukhin. 2017. “Attention Is All You Need.” https://proceedings.neurips.cc/paper/2017/file/3f5ee243547dee91fbd053c1c4a845aa-Paper.pdf.

Wikipedia Contributors. 2023. “Byte Pair Encoding.” Wikipedia. Wikimedia Foundation. February 2, 2023. https://en.wikipedia.org/wiki/Byte_pair_encoding.

FastCompany. 2023. “We asked ChatGPT to write performance reviews and they are wildly sexist (and racist).” https://www.fastcompany.com/90844066/chatgpt-write-performance-reviews-sexist-and-racist.

Lomas, Natasha. 2022. “ChatGPT Shrugged.” TechCrunch. December 5, 2022. https://techcrunch.com/2022/12/05/chatgpt-shrugged/.